About

Open to Research Collaboration

I work on making AI systems faster and smaller. My research focuses on model compression and hardware-aware optimization: choosing the right precision for each layer and building neural networks that run efficiently on real devices.

Education

Sungkyunkwan University, 2023 - 2027

- B.E. in Advanced Semiconductor Engineering, GPA 4.2 / 4.5

- B.E. in Systems Management Engineering, GPA 4.4 / 4.5

Research

Experience

Undergraduate Researcher

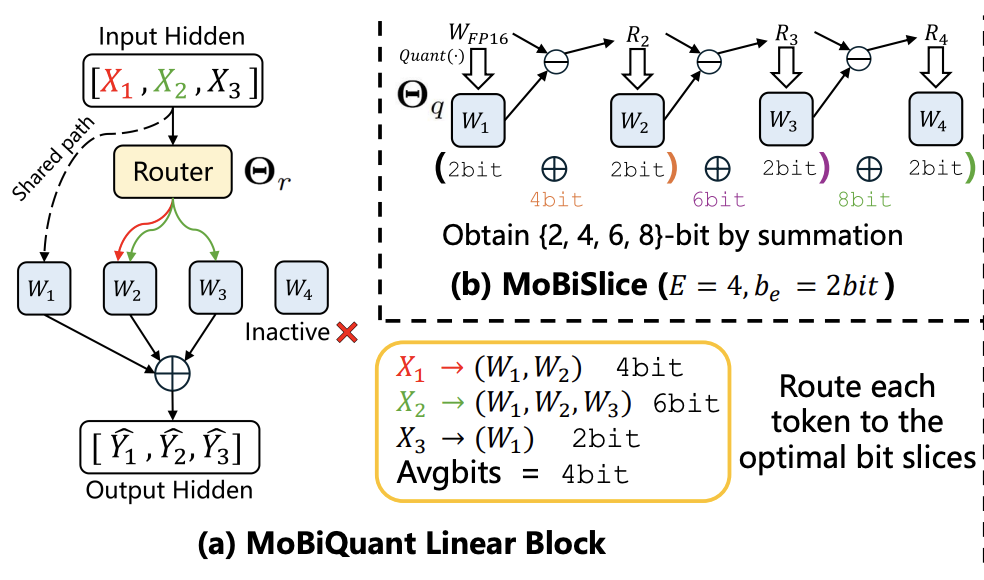

- Precision-scalable neural architectures for compute-efficient inference

- Robust optimization under extreme quantization constraints

AI Software Engineer

- LLM platform with RAG for large-scale enterprise data

- Model quantization and high-performance LLM serving

Software Developer Intern

- Backend development (Java), database architecture

Selected Publications

MoBiQuant: Mixture-of-Bits Quantization for Token-Adaptive Elastic LLMs

Published as an arXiv preprint, 2026

* equal contribution, † corresponding author

Recommended citation: https://arxiv.org/pdf/2602.20191

MSQ: Memory-Efficient Bit Sparsification Quantization

Published in ICCV 2025

Recommended citation: https://www.arxiv.org/pdf/2507.22349